Proactive Robot Learners that Ask for Help

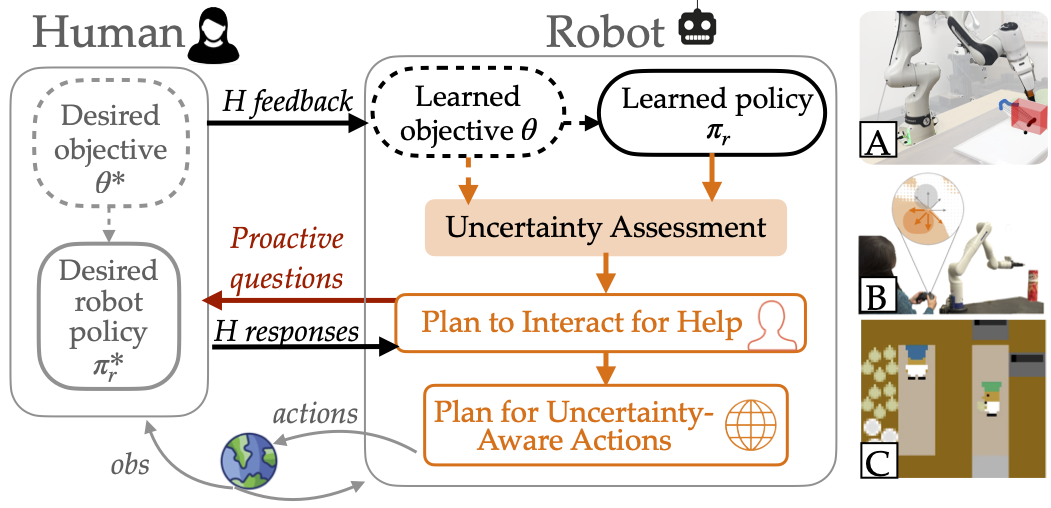

While today’s robot learning algorithms increasingly enable people to teach robots via diverse forms of feedback (e.g., demonstration, language), they place the burden on the human to understand exactly what the robot doesn’t know and to provide the “right” data. We’re working on ways for robots to instead be proactive participants: they should bear some of the burden of knowing when they don’t know and should ask for targeted help. We want to give the robot a self-assessment of its uncertainty, calibrated to human feedback received online, so that it can ask for strategic help via natural language.

Questions can be directed to Michelle.

Relevant publications

Coordination with Humans via Strategy Matching.

Michelle Zhao, Reid Simmons, Henny Admoni. IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). 2022.

The Role of Adaptation in Collective Human-AI Teaming.

Michelle Zhao, Fade Eadeh, Thuy-Ngoc Nguyen, Pranav Gupta, Henny Admoni, Cleotilde Gonzalez, Anita Williams Woolley. Topics in Cognitive Science. 2022.

Teaching agents to understand teamwork: Evaluating and predicting collective intelligence as a latent variable via Hidden Markov Models.

Michelle Zhao, Fade Eadeh, Thuy-Ngoc Nguyen, Pranav Gupta, Henny Admoni, Cleotilde Gonzalez, Anita Williams Woolley. Computers in Human Behavior. 2022.

Adapting Language Complexity for AI-Based Assistance.

Michelle Zhao, Reid Simmons, Henny Admoni. Workshop on Lifelong Learning and Personalization in Long-Term Human-Robot Interaction at HRI 2021. 2021.

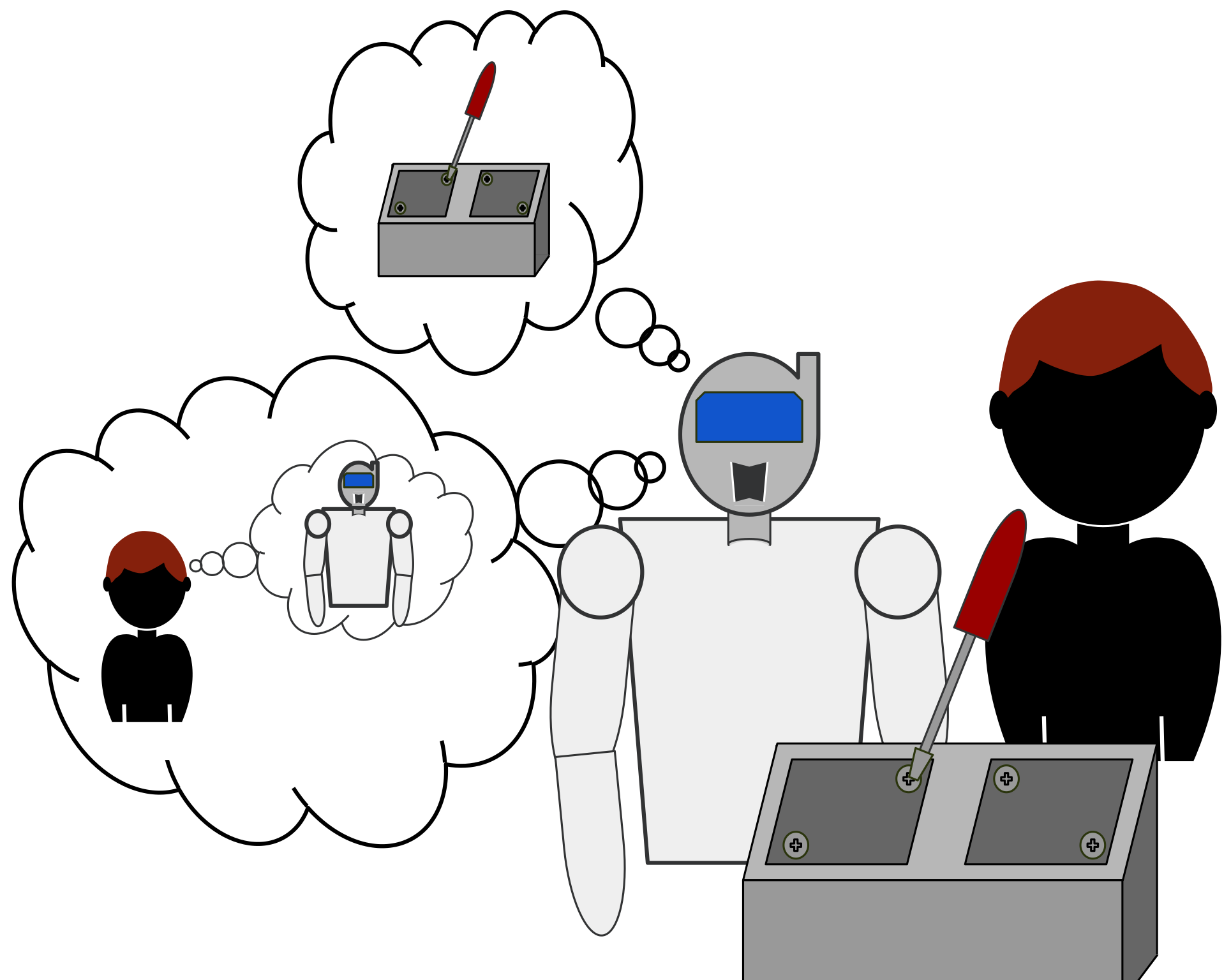

Using Theory of Mind to Improve How Robots Learn from Human Teachers

My research investigates how principles from social cognition (namely our Theory of Mind and our cognitive biases and heuristics) can inform the ways robots infer a person's beliefs about other agents, whether human, robot, or otherwise. Through these investigations, I aim to endow artificial agents with models of human cognition that make interactions with them fluid, intuitive, and altogether more helpful for people.

Questions can be directed to Pat Callaghan.

Fostering Intelligent Social Support Networks

Social support is the perception or experience that one is loved and cared for by others, esteemed and valued, and part of a network of mutual assistance and obligations. Social support networks are linked to positive health outcomes and overall quality of life for older adults. Retirement, relocation, the loss of friends, and declines in cognitive and motor functions can create barriers for older adults, making it challenging for them to participate in social activities and form meaningful connections that foster robust social support networks. AI-related technologies offer promising opportunities to support social interactions for older adults and their support networks by reducing barriers to social engagement. As part of the AI-CARING project, I adopt an interdisciplinary and collaborative approach to explore the question: “How can we design AI assistance that fosters intelligent social support networks?”

Questions can be directed to Pragathi.

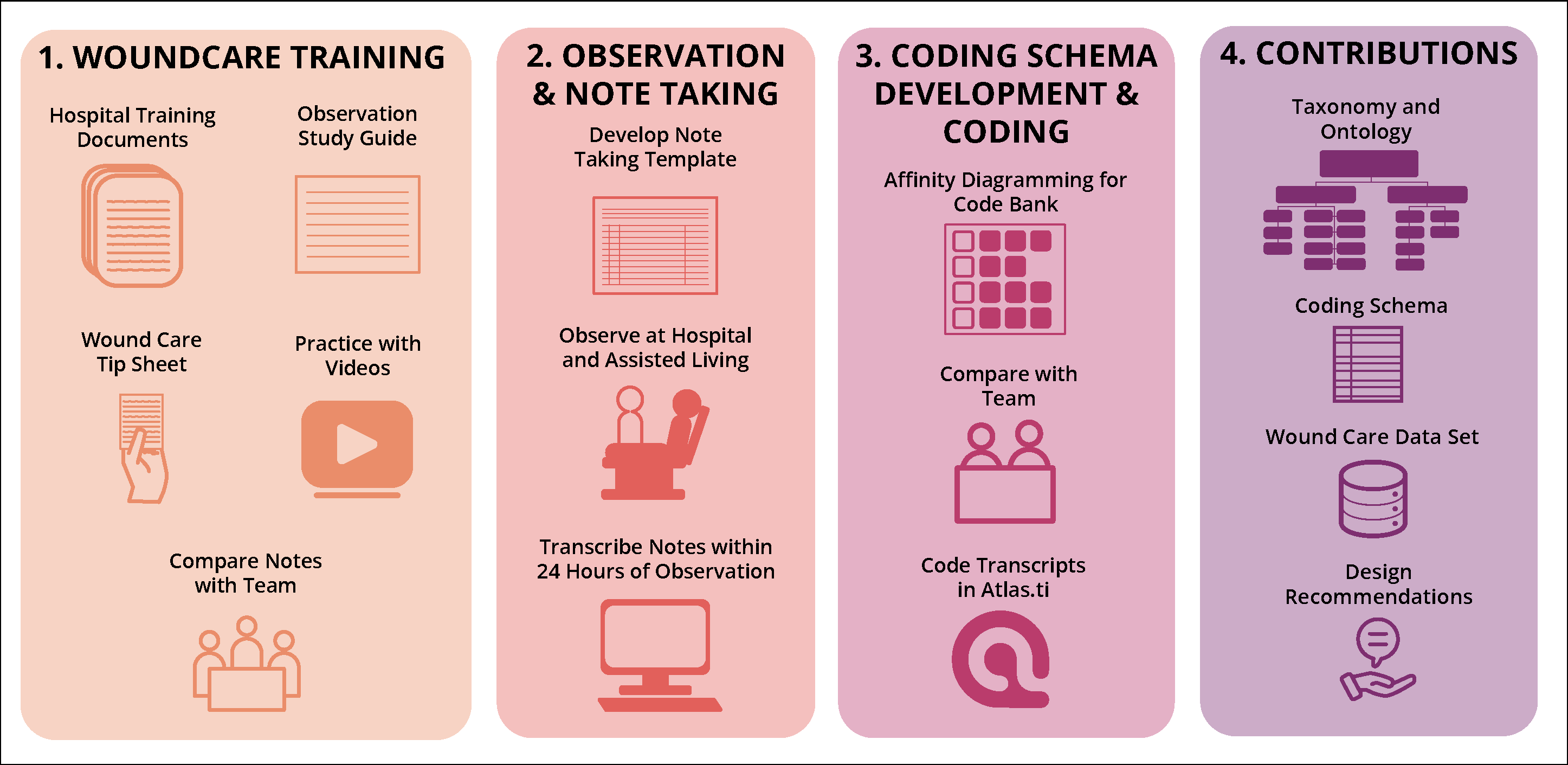

Understanding Wound Care Robotic Design Needs

As the global population ages, both the physical and financial impacts of wounds are expected to increase. Compounding this issue is the growing national nursing shortage, which directly affects the quality of care for older adults. While assistive robots have shown promise in various healthcare applications, their potential in wound care remains largely unexplored. A critical gap exists in understanding wound care from a human-centered robotics perspective, which is essential for developing effective assistive technologies in this domain.

Questions can be directed to Zulekha, Annika and Ellen.

Shared Autonomy in Interactive Imitation Learning

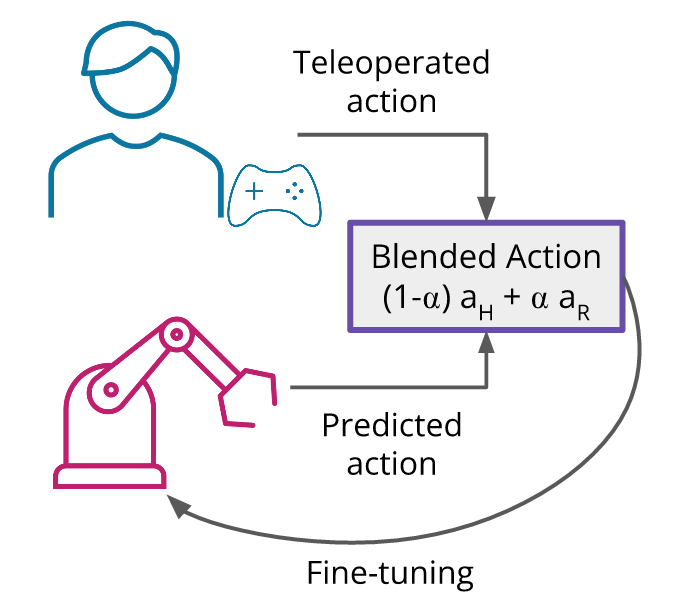

Interactive imitation learning is often used to fine-tune robot policies, especially for manipulation tasks. In this paradigm, control is transferred to a person to demonstrate part of the task whenever the robot has high uncertainty (robot-gated) or the person decides to intervene (human-gated). However, when control is handed back fully to a human demonstrator, teleoperating a manipulator can be cognitively demanding, and the resulting demonstrations might not always be optimal. We're investigating whether blended shared control -- where the user's teleoperated actions and the robot's predicted actions are combined -- can reduce this burden while still providing a valuable training signal for improving manipulation policies.
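To make the idea of combining the two action sources concrete, here is a minimal sketch of one common form of blending: a weighted average of the user's teleoperated action and the robot's predicted action. The project description does not say which blending rule is used, so the linear rule, the function name `blend_actions`, and the `robot_weight` parameter are all assumptions made for illustration.

```python
from typing import List, Sequence


def blend_actions(
    human_action: Sequence[float],
    robot_action: Sequence[float],
    robot_weight: float,
) -> List[float]:
    """Linearly blend a human action with a robot action.

    Hypothetical helper, not taken from the project: robot_weight = 0.0
    returns the human's action unchanged, robot_weight = 1.0 returns the
    robot's action unchanged, and values in between mix the two.
    """
    if len(human_action) != len(robot_action):
        raise ValueError("human and robot actions must have the same dimension")
    if not 0.0 <= robot_weight <= 1.0:
        raise ValueError("robot_weight must lie in [0, 1]")

    # Element-wise convex combination of the two action vectors.
    return [
        robot_weight * r + (1.0 - robot_weight) * h
        for h, r in zip(human_action, robot_action)
    ]
```

In a sketch like this, `robot_weight` could be tied to how confident the policy is, so that the person contributes more when the robot is uncertain. That coupling is also an assumption rather than something the description specifies.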

Questions can be directed to Cailyn.

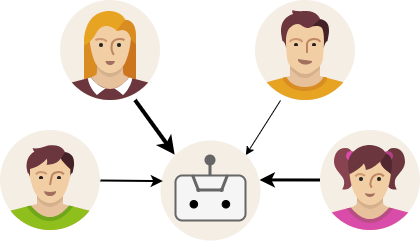

Using Theory of Mind to Learn from Multiple Teachers

Household robots learn from a family much more informally than they would in a laboratory. Instead of skilled professionals teaching toward well-defined task performance, a family has many suboptimal teachers, each with their own biases and none following a central curriculum. This work uses Theory of Mind to allow the robot to bridge this gap.

Questions can be directed to Jack.